This is a short, practical article about my development setup for Windows and Linux. I usually develop cross-platform, because it doesn’t add much work and I switch between the two systems from time to time. Both have advantages and disadvantages for me, but I won’t go into details here.

For version control I use Mercurial along with Bitbucket. I prefer Mercurial over Git for a simple reason: there is a very good open source GUI client for both operating systems, namely TortoiseHg. For Git there are a few Windows clients, but I didn’t find a Linux one that I liked. Bitbucket is cool because it provides the usual code hosting plans, Mercurial and Git hosting, an optional bug tracker, a wiki, code browsing and more.

The next thing is a cross-platform build system: CMake. Most Linux and cross-platform IDEs support it, and it’s easy to generate a Visual Studio project from it. Here is the build file of my latest project. It includes OpenGL, CUDA and some other libraries. You can use it as a template.

```cmake
cmake_minimum_required(VERSION 2.6)

project(firefly)

# This is my project structure:
# ~/firefly/src/CMakeLists.txt  (this file)
# ~/firefly/src/main.cpp        (and other source files, there are also subdirectories)
# ~/firefly/data/..             (shaders, music, model data..)
# ~/firefly/linlib/lib/..       (Linux libs not installed on the system, usually .so and .a files)
# ~/firefly/winlib/lib/..       (Windows libs not installed on the system, usually .lib and .a files)
# ~/firefly/*inlib/include/..   (header files of libs not installed on the system)
# ~/firefly/build/..            (not committed to version control, names vary, created by CMake;
#                                .dll files need to be copied here. There must be a CMake command
#                                that could do that, but I did it manually)
# ~/firefly/documentation/..    (optional documentation, reports for uni etc.)

cmake_policy(SET CMP0015 OLD)

#find_package(Qt4 REQUIRED)
find_package(OpenGL REQUIRED)
find_package(CUDA REQUIRED)

#include_directories(${CMAKE_SYSTEM_INCLUDE_PATH} ${QT_INCLUDES} ${CMAKE_CURRENT_BINARY_DIR} ${CMAKE_CURRENT_SOURCE_DIR})
include_directories(${CMAKE_CURRENT_BINARY_DIR} ${CMAKE_CURRENT_SOURCE_DIR} ../include)

if(CMAKE_SYSTEM_NAME STREQUAL "Linux")
  link_directories(/usr/lib /usr/local/lib ../linlib/lib)
  # otherwise some Bullet internal headers don't find their friends..
  include_directories(/usr/local/include/bullet /usr/include/bullet ${CMAKE_CURRENT_SOURCE_DIR}/../linlib/include /usr/local/cuda/include)
else()
  # Windows
  include_directories(${CMAKE_CURRENT_SOURCE_DIR}/../winlib/include ${CMAKE_CURRENT_SOURCE_DIR}/../winlib/include/bullet)
  link_directories(${CMAKE_CURRENT_SOURCE_DIR}/../winlib/lib)
endif()

set(project_SRCS
  # list all source and header files here, separated by spaces or newlines
  main.cpp
  Class.cpp Class.h
  # ..
)

# shaders, optional, will be shown in the IDE, but not compiled
file(GLOB RES_FILES
  ../data/shader/Filename.frag
  ../data/shader/Filename.vert
)

#set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS} -O3 --use_fast_math -gencode arch=compute_20,code=sm_21 --maxrregcount 32)
#set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS} "--use_fast_math -gencode arch=compute_20,code=sm_20 -lineinfo -G") #-G for cuda debugger
#set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS} "--use_fast_math -gencode arch=compute_20,code=sm_21 -lineinfo")
set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS} "--use_fast_math -gencode arch=compute_20,code=sm_21 -lineinfo --maxrregcount 32")

if(CMAKE_SYSTEM_NAME STREQUAL "Linux")
  # "-D VIENNA_DEBUG" defines the preprocessor variable VIENNA_DEBUG
  #set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -D VIENNA_DEBUG -D VIENNA_LINUX -std=c++11")
  # append to the existing flags instead of overwriting them
  set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -D VIENNA_LINUX -std=c++11")
  set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS} " -std=c++11")
else()
  # "/DVIENNA_DEBUG" defines the preprocessor variable VIENNA_DEBUG
  #add_definitions(/DVIENNA_DEBUG)
  add_definitions(/DVIENNA_WINDOWS)
  set(CMAKE_EXE_LINKER_FLAGS "${CMAKE_EXE_LINKER_FLAGS}")
endif()

#qt4_automoc(${project_SRCS})
#add_executable(firefly ${project_SRCS})
cuda_add_executable(firefly ${RES_FILES} ${project_SRCS})

if(CMAKE_SYSTEM_NAME STREQUAL "Linux")
  set(LIBS ${LIBS} X11 Xxf86vm Xi GL glfw3 GLEW Xrandr pthread assimp BulletDynamics BulletCollision LinearMath fmodex64 freeimage gsl gslcblas ${CUDA_curand_LIBRARY})
  target_link_libraries(firefly ${LIBS})
else()
  set(LIBS ${LIBS} OpenGL32 glfw3 GLEW32 assimp fmodex64_vc FreeImage gsl cblas ${CUDA_curand_LIBRARY})
  # Bullet: link the _Debug builds in the Debug configuration and the regular builds in all others
  target_link_libraries(firefly ${LIBS}
    debug BulletDynamics_Debug debug BulletCollision_Debug debug LinearMath_Debug
    optimized BulletDynamics optimized BulletCollision optimized LinearMath)
endif()
```
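
I mentioned above that I copy the .dll files into the build directory by hand. A post-build step along the following lines should be able to do it automatically. Treat it as an untested sketch: it assumes the DLLs sit next to the import libraries in `winlib/lib` (that location and the `FIREFLY_DLLS` variable name are made up for this example), and it needs a CMake version newer than the 2.6 required above, because it uses the `$<TARGET_FILE_DIR:...>` generator expression.

```cmake
# Sketch: copy third-party DLLs next to the built executable after every build.
# Assumption: the DLLs live in ../winlib/lib; adjust the path to your layout.
if(WIN32)
  file(GLOB FIREFLY_DLLS "${CMAKE_CURRENT_SOURCE_DIR}/../winlib/lib/*.dll")
  foreach(dll ${FIREFLY_DLLS})
    add_custom_command(TARGET firefly POST_BUILD
      # copy_if_different skips files that are already up to date
      COMMAND ${CMAKE_COMMAND} -E copy_if_different
              "${dll}" "$<TARGET_FILE_DIR:firefly>"
      VERBATIM)
  endforeach()
endif()
```

This has to come after the `cuda_add_executable(firefly ...)` line, because the `firefly` target must exist before a command can be attached to it. With the Visual Studio generator the DLLs end up in the per-configuration output folder (Debug, Release and so on), which is where the executable looks for them.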

And lastly, you’ll need cross-platform libraries. Fortunately, there are quite good ones.

- For GUI I use Qt. It can also open an OpenGL window, but if you don’t need a GUI, it would be overkill.

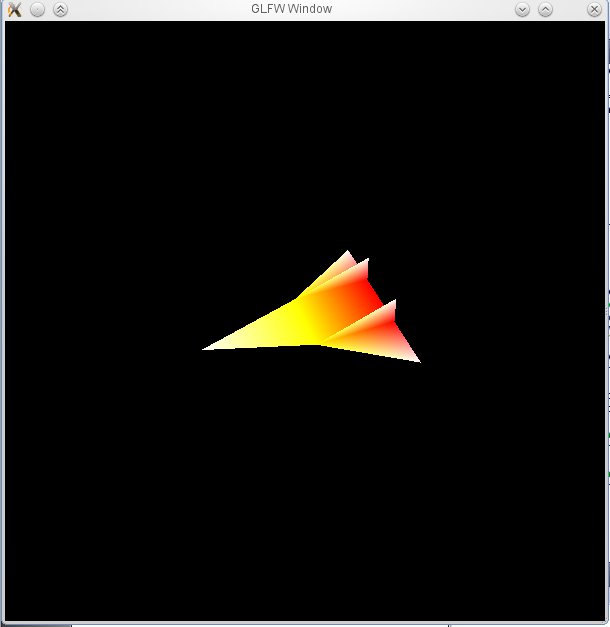

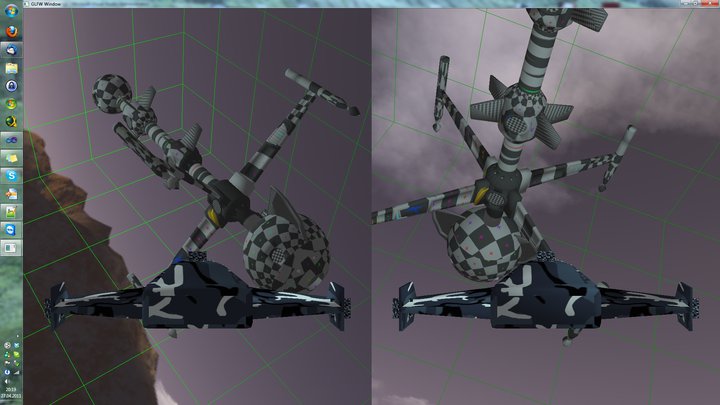

- So for Chawah and Firefly I used GLFW, which simply opens a window and provides mouse and keyboard input.

- Then you need an OpenGL extension loader. I used GLEW, but there are alternatives.

- For math (everything connected to vectors and matrices) I can recommend GLM.

- Assimp loads meshes, objects, animations and whole scene graphs, but it’s a bit buggy in certain areas and lacks certain features: as far as I know, only linear animations, only simple materials and only basic lights (no area lights). I didn’t find anything better though.

- Then there is FreeImage, which is a very easy-to-use image loader and exporter.

- You can also check out the other libs from the CMake file.

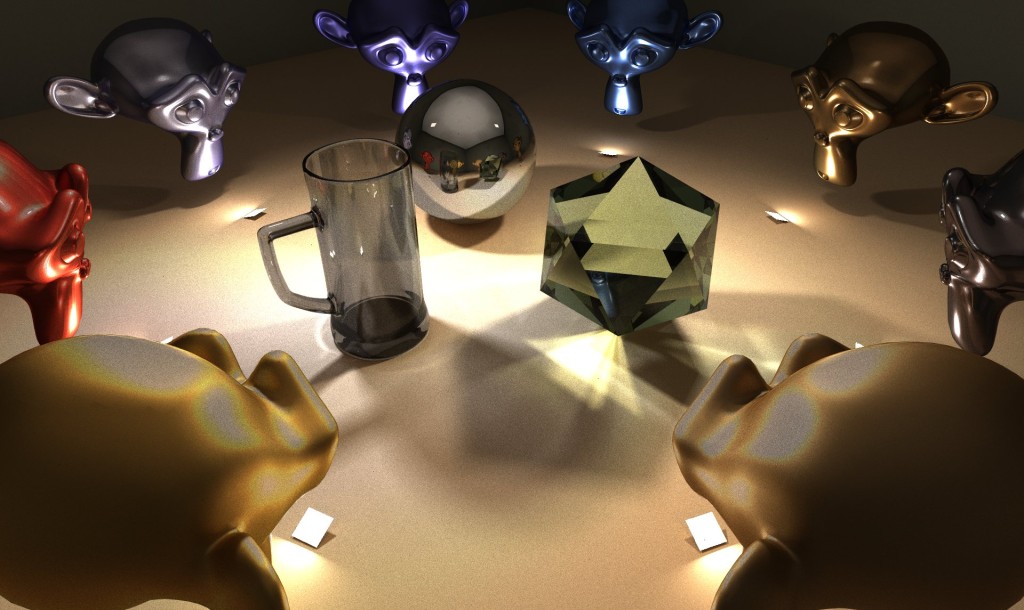
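
The CMake file above links these libraries by bare name and relies on `link_directories`, so a missing library only shows up as a linker error. If you prefer to catch that at configure time, something like the following sketch could be used for GLFW. The variable names `LOCAL_LIB_DIR` and `GLFW3_LIBRARY` are made up for this example, and I haven't tested it against the firefly project.

```cmake
# Sketch: locate GLFW explicitly and fail early if it is missing.
# LOCAL_LIB_DIR and GLFW3_LIBRARY are hypothetical names for this example.
if(CMAKE_SYSTEM_NAME STREQUAL "Linux")
  set(LOCAL_LIB_DIR "${CMAKE_CURRENT_SOURCE_DIR}/../linlib/lib")
else()
  set(LOCAL_LIB_DIR "${CMAKE_CURRENT_SOURCE_DIR}/../winlib/lib")
endif()

# Checks the project-local directory first, then the usual system locations
find_library(GLFW3_LIBRARY NAMES glfw3 HINTS "${LOCAL_LIB_DIR}")
if(NOT GLFW3_LIBRARY)
  message(FATAL_ERROR "glfw3 not found in ${LOCAL_LIB_DIR} or the system paths")
endif()

# Must come after the firefly target has been created
target_link_libraries(firefly ${GLFW3_LIBRARY})
```

The same pattern would work for the other libraries. Whether it is worth the extra lines depends on how often you set the project up on a fresh machine.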

In case you want a working example, just download the firefly project. The project is quite big; I made a post about it. If you have any questions, I’ll answer them in the comments.