This post is about a demo called Firefly, which I made for a course at the Vienna University of Technology. The goal was to create a graphics demo, meaning a program with 3D animation that runs in real time, without user interaction, for a few minutes and shows interesting graphics effects.

This is the result (also available in HD): Watch on YouTube

Notice that there are only two traditional lights (lamps). The arcade is mostly lit by indirect light, and the cubes shine from every point on their surface.

And I’m quite proud that I won first place in the course’s contest :D

Now that you’ve seen the video, I’d like to talk a bit about the technology. At the end there is a conclusion, which you shouldn’t skip. Finally, there is information on how to get the code and executables.

Technology

I implemented a deferred rendering system using OpenGL and CUDA. The bouncing cubes are simulated with Bullet by simply applying random forces. There are two main spotlights computed via shadow maps; indirect light is computed via ray tracing.

All geometry and texture information is rendered into a buffer on the GPU using OpenGL. The shadow maps are also rendered by OpenGL. Up to this point it is a standard deferred rendering system, so I’ll skip the details. Those buffers are then passed to CUDA, where the shading happens. This shading stage is divided into several CUDA kernels.

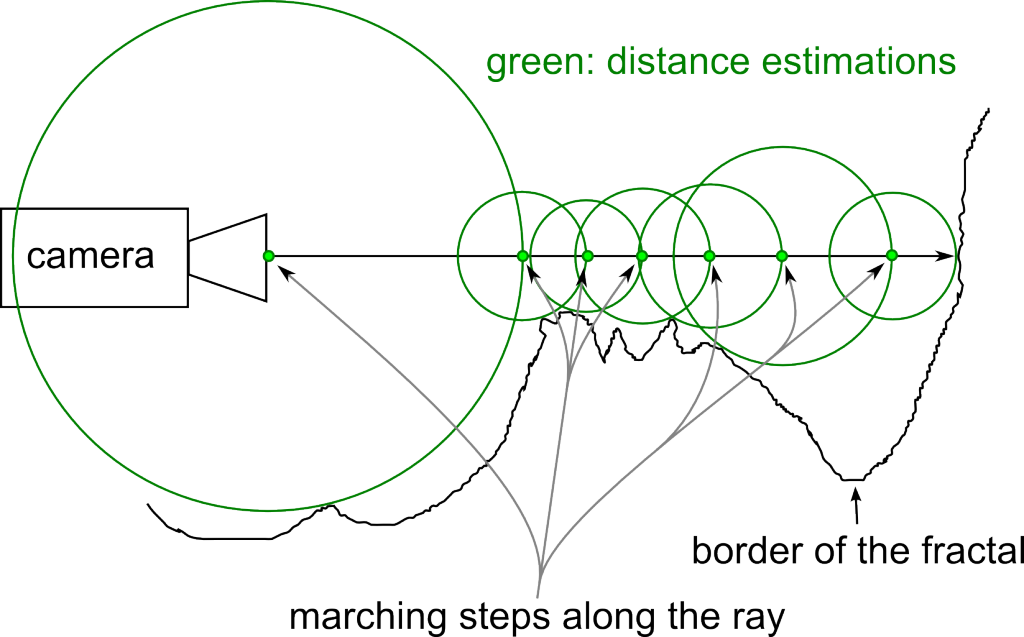

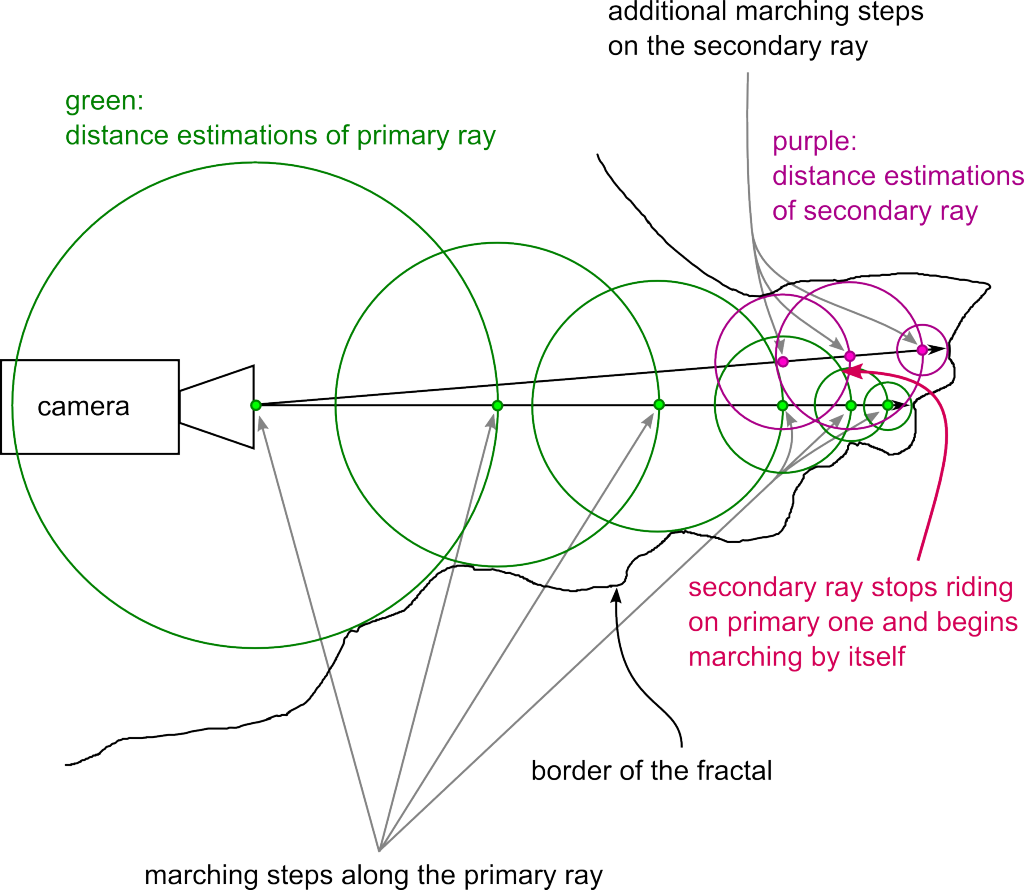

- The first is doing the ray tracing.

It is path tracing with only two bounces:

camera --> surface --> surface --> light.

The first ray from the camera is not traced; it is taken from the geometry buffer instead. The last ray isn’t traced either; it is taken from the shadow map. So only the middle ray is traced. Since indirect light usually contains only low frequencies, it can be computed at half resolution with very little quality loss and a big performance gain. On the half-resolution image, 2 to 16 rays are computed per pixel. (In the download you’ll find several executables; the quality setting refers to the number of rays per pixel. If I remember correctly, 4 rays per pixel were used for the video, which runs in real time (>30 fps) on a modern GPU.) For the ray tracing itself I adapted a highly optimised kernel developed by Finnish NVIDIA engineers, along with a good BVH builder from the same source.

- The next kernel filters the result of the ray tracing kernel. The filter blurs, but it takes normals and distance into account so that indirect light isn’t blurred across edges. Because of this additional information, the filter is not separable into a horizontal and a vertical pass. The kernel had bandwidth and latency issues, so I had to put quite some effort into optimising it.

- Finally there is the main shading kernel, which computes the traditional shading and adds the indirect light. This is quite straightforward, with one little catch. Since the indirect light is computed at a lower resolution, in some cases there were ugly aliasing effects. Imagine a dark polygon in the foreground and a bright, indirectly lit one in the background. This situation would result in 2×2 steps along the edge. This is alleviated by another blurring stage, which takes normals and distance from the higher-resolution buffer into account. Pixels on polygon edges, which previously took the colour of the underlying 2×2 block even when it belonged to a different polygon, now take their colour only from the correct polygon.
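
The edge-aware filtering and upsampling described in the last two list items can be sketched on the CPU as follows. This is only a minimal Python illustration, not the demo’s CUDA kernel: the function names, the 3×3 footprint, the Gaussian depth weight, the power on the normal term, and the default parameter values are all assumptions made for the example.

```python
import math

def edge_weight(n_a, d_a, n_b, d_b, sigma_depth=0.1, normal_power=8.0):
    """Weight in [0, 1]; close to 1 only if both samples lie on the same surface."""
    # Normals are assumed to be unit vectors; the dot product drops as they diverge.
    cos_angle = max(0.0, sum(a * b for a, b in zip(n_a, n_b)))
    w_normal = cos_angle ** normal_power
    # Gaussian falloff on the depth difference (sigma_depth is a made-up value).
    w_depth = math.exp(-((d_a - d_b) ** 2) / (2.0 * sigma_depth ** 2))
    return w_normal * w_depth

def upsample_indirect(x, y, low_color, low_normal, low_depth, hi_normal, hi_depth):
    """Indirect light for full-resolution pixel (x, y) from half-resolution buffers.

    Buffers are 2D lists indexed [row][col]; colours are scalars for brevity.
    """
    lx, ly = x // 2, y // 2
    h, w = len(low_color), len(low_color[0])
    total, weight_sum = 0.0, 0.0
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            # Clamp to the buffer border; border texels may be counted more than once.
            sx = min(max(lx + dx, 0), w - 1)
            sy = min(max(ly + dy, 0), h - 1)
            # Compare the low-res sample against the FULL-resolution geometry.
            wgt = edge_weight(hi_normal[y][x], hi_depth[y][x],
                              low_normal[sy][sx], low_depth[sy][sx])
            total += wgt * low_color[sy][sx]
            weight_sum += wgt
    if weight_sum < 1e-6:
        # No neighbour matches the surface: fall back to the nearest low-res texel.
        return low_color[ly][lx]
    return total / weight_sum
```

In this sketch, a full-resolution pixel that belongs to the bright background but sits on top of a dark foreground texel in the half-resolution buffer gets almost all of its weight from neighbouring background texels, which is the effect that removes the 2×2 steps.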

After the CUDA part has finished, the buffers are handed back to OpenGL for post-processing: basic tone mapping, bloom, and lens flares. Since I based Firefly on a previous project’s OpenGL engine, those effects were already implemented. I won’t go into details because the effects are pretty standard and there are already a lot of sources.

Conclusion

OK, so usually one should write how cool it was and how well the method worked. I’m an honest person: it was cool to program, I learned a lot, I won first place, and I don’t regret it. But the approach is bad, don’t try it out :). Here is why:

My university tutors feared that the switch between OpenGL and CUDA would be too expensive, but this was not the case. Naturally there are costs, but those are way under 1 ms (unpacking geometry data and writing the output in CUDA costs about 1 ms, which already includes some computation work, while ray tracing takes around 30 ms). So this was the positive side: you can switch between OpenGL and CUDA every frame, and if you do it right, the performance will be OK.
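
As a rough sanity check with the numbers above, treating 1 ms as an upper bound for the interop overhead and 30 ms as the tracing time, and ignoring filtering, shading, and post-processing (so the real share is even smaller):

$$
\frac{t_\text{interop}}{t_\text{interop} + t_\text{trace}} \lesssim \frac{1\,\text{ms}}{1\,\text{ms} + 30\,\text{ms}} \approx 3\,\%
$$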

But to understand why the approach is only mediocre, one needs to understand how ray tracing performs on the GPU. Almost parallel rays are fast, random rays are slow. The reason is that “similar” rays mostly need the same elements of the Bounding Volume Hierarchy, so execution and data divergence are low. Random rays are the opposite. That’s why it’s possible to get more than 60 fps when shooting only primary rays, while shooting just one random ray per pixel from the surface originally brought the performance down to under 10 fps. Similarly, it should be possible to compute rays from the lamps to the surface quickly, but that might require sorting and I didn’t test it.
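
To make the difference between “similar” and random rays a bit more concrete, here is a small Python sketch that compares a group of primary rays from neighbouring pixels with a group of random bounce rays. It uses the mean pairwise dot product of the directions as a crude proxy for coherence; it does not model BVH traversal or GPU execution at all. The pinhole camera, the 8×4 pixel tile, the group size of 32, and the use of cosine-weighted hemisphere sampling for the bounce are assumptions for illustration, not details taken from the demo.

```python
import math
import random

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def primary_ray(px, py, width, height, fov_deg=60.0):
    """Direction of a pinhole-camera ray through pixel (px, py), looking down -z."""
    half = math.tan(math.radians(fov_deg) / 2.0)
    aspect = width / height
    u = (2.0 * (px + 0.5) / width - 1.0) * half * aspect
    v = (1.0 - 2.0 * (py + 0.5) / height) * half
    return normalize((u, v, -1.0))

def cosine_hemisphere_ray(rng):
    """Cosine-weighted random unit direction around the surface normal (0, 0, 1)."""
    r = math.sqrt(rng.random())
    phi = 2.0 * math.pi * rng.random()
    return (r * math.cos(phi), r * math.sin(phi), math.sqrt(max(0.0, 1.0 - r * r)))

def mean_pairwise_dot(rays):
    """Average dot product over all pairs; 1.0 means all rays are parallel."""
    total, count = 0.0, 0
    for i in range(len(rays)):
        for j in range(i + 1, len(rays)):
            total += sum(a * b for a, b in zip(rays[i], rays[j]))
            count += 1
    return total / count

rng = random.Random(1)
# 32 rays per group, loosely modelling one warp working on an 8x4 pixel tile.
coherent = [primary_ray(600 + x, 300 + y, 1280, 720) for y in range(4) for x in range(8)]
incoherent = [cosine_hemisphere_ray(rng) for _ in range(32)]
print("primary rays:", mean_pairwise_dot(coherent))
print("bounce rays: ", mean_pairwise_dot(incoherent))
```

The first value comes out very close to 1, the second noticeably lower. Rays in the second group point all over the hemisphere, so they are far more likely to visit different BVH nodes and triangles, which is the divergence described above.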

So the approach described above accelerates the fast part of a two-bounce path tracing algorithm, but the slow part, computing a random ray from one surface to another, stays slow.

From a software engineering perspective, it would be much better not to mix ray tracing and the traditional pipeline in this case, because the costs are too high compared to the benefits. A lot of data is duplicated on the graphics card, it is cumbersome to program, and the tradeoffs make performance okay-ish, but the quality is not great (look at the flickering and noise). It’s just not production ready and it never will be. It’s better to wait another couple of years until GPUs are fast enough to do real path tracing in real time.

When I started programming, I was also thinking about implementing caustics. They could produce really nice effects and run at over 60 fps, depending on the quality of the caustics, which could justify ray tracing. If somebody tries that, please let me know about it in the comments. In my case I couldn’t do it due to time constraints.

On a side note, I didn’t consider NVIDIA OptiX because of past experiences with it. I was quite satisfied with CUDA; a ray tracing library would be cool, but that’s a pretty big wish :)

Code and Executable

You can use my old repository directly. The last version of the demo code is tagged; there are also a few more revisions for another lecture’s submission.

I have packed everything together into a zip file. There are Windows and Linux versions. You’ll need an NVIDIA graphics card and recent drivers. You might also need CUDA, and you might need to delete the bundled CUDA library files. It’s hard to deploy to unknown systems, so do whatever works for you :). I used CUDA 6.5.